The Quiz-Me Skill

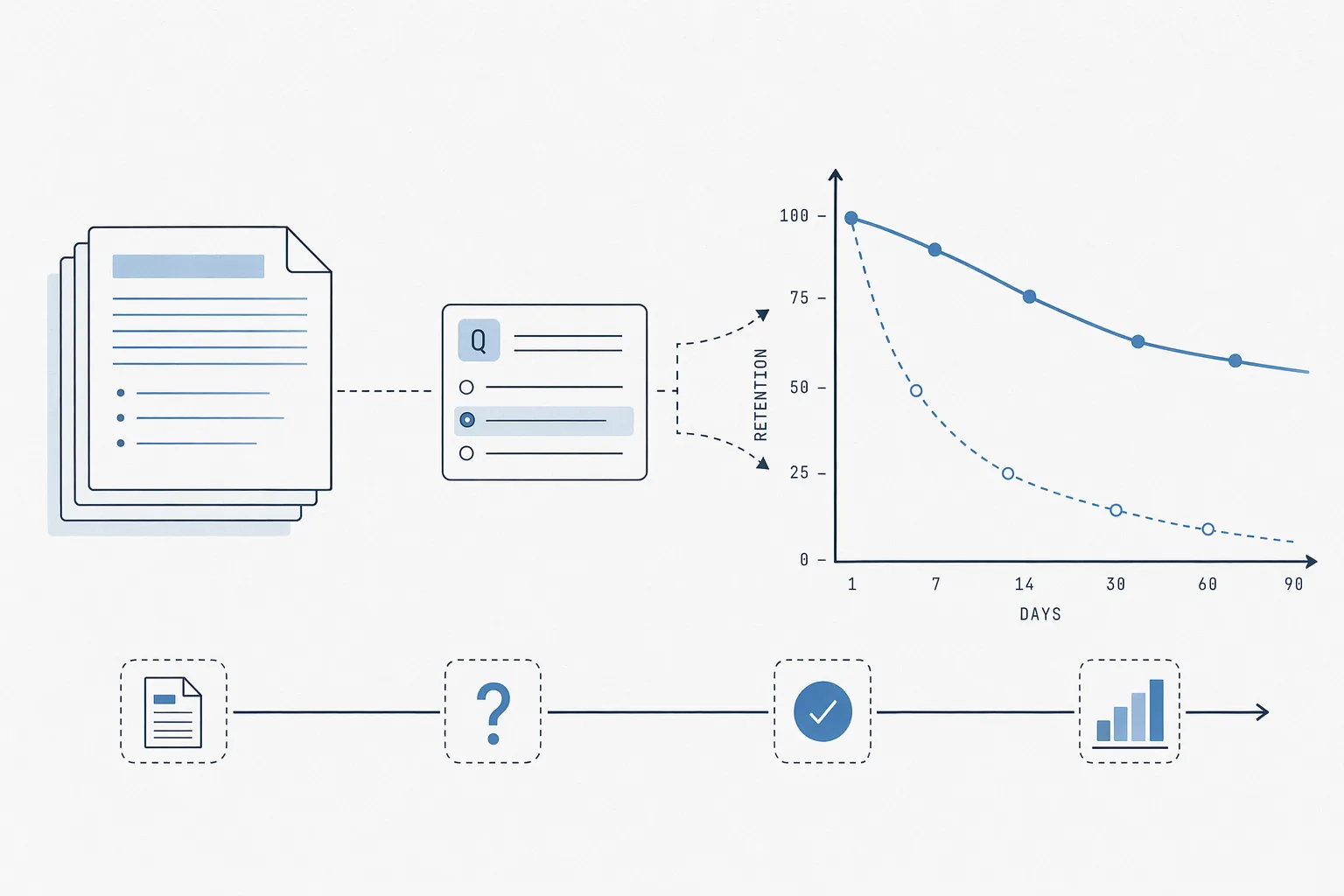


Reading documentation once doesn't make you fluent in it. I built a quiz-me skill because I needed to answer questions about a private operating document on the spot, without pulling up the reference doc. Re-reading the material every two weeks wasn't moving the needle.

You can download this skill from github.com/bogdanbaciu21/skills.

How it works

The skill loads the reference material (in my case, a private policy document plus the engine source code that operationalized it) and generates 10 or 20 questions on the fly, depending on the mode. It presents them all at once, and I answer all of them in a single message. It grades each answer against the reference doc first, then checks the code if there's a discrepancy; the code is the source of truth. Each row of the results table records the question number, my answer, the verdict, and the correct answer, with a one-line explanation when I got it wrong.

Correct = 2 points, partial = 1, wrong = 0. A full 20-question quiz is worth at most 40 points, so dividing by 2 gives a score out of 20.
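To make the scoring arithmetic concrete, here is a minimal sketch of the results table and the score calculation. The `Result` fields mirror the columns described above, but the class, the function names, and the Markdown rendering are my own illustration, not the skill's actual code; in the skill itself, the model produces the table directly.

```python
from dataclasses import dataclass

# Points per verdict: correct = 2, partial = 1, wrong = 0.
POINTS = {"correct": 2, "partial": 1, "wrong": 0}


@dataclass
class Result:
    """One row of the results table (hypothetical field names)."""
    number: int
    my_answer: str
    verdict: str            # "correct", "partial", or "wrong"
    correct_answer: str
    explanation: str = ""   # one-line explanation, filled in when the answer was wrong


def score_out_of_20(results: list[Result]) -> float:
    """Sum the points for a full 20-question round (max 40) and halve them."""
    total = sum(POINTS[r.verdict] for r in results)
    return total / 2


def render_table(results: list[Result]) -> str:
    """Format the results as a Markdown table, appending explanations to wrong answers."""
    lines = [
        "| # | My answer | Verdict | Correct answer |",
        "|---|-----------|---------|----------------|",
    ]
    for r in results:
        answer = r.correct_answer
        if r.verdict == "wrong" and r.explanation:
            answer += f" ({r.explanation})"
        lines.append(f"| {r.number} | {r.my_answer} | {r.verdict} | {answer} |")
    return "\n".join(lines)
```

For example, 14 correct, 4 partial, and 2 wrong answers earn 28 + 4 + 0 = 32 points, which is 16 out of 20.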

Modes

- No argument: full 20-question quiz across all topic areas

- quick: 10-question rapid-fire

- weak: weights 70% of questions toward the topics I've historically scored lowest on

- grade: skips the quiz and shows progress over time

- Any topic name: focuses the quiz on that area

The weighted-weak mode is the one that actually compounds. Without it I was getting lucky on the topics I already knew and never drilling the gaps.
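One way to implement the 70% weighting is to decide how many questions each topic gets before any questions are generated. The sketch below assumes that "weakest" means the two lowest-scoring topics (`n_weak_topics` is a hypothetical knob) and spreads questions round-robin within each bucket. The skill may well do this differently; this is only one reasonable allocation.

```python
def allocate_questions(
    topic_scores: dict[str, float],
    n_questions: int = 20,
    weak_share: float = 0.7,
    n_weak_topics: int = 2,
) -> dict[str, int]:
    """Decide how many questions each topic gets in weak mode (hypothetical helper).

    topic_scores maps topic -> historical score (lower means weaker).
    About weak_share of the questions go to the n_weak_topics lowest-scoring
    topics; the remainder is spread across the other topics.
    """
    if not topic_scores or n_weak_topics < 1:
        raise ValueError("need at least one topic and at least one weak topic")
    ranked = sorted(topic_scores, key=topic_scores.get)  # weakest first
    weak, rest = ranked[:n_weak_topics], ranked[n_weak_topics:]
    n_weak = round(n_questions * weak_share)
    if not rest:  # every topic counts as weak, so the weak bucket gets everything
        weak, n_weak = ranked, n_questions
    counts = {topic: 0 for topic in ranked}
    # Round-robin within each bucket keeps the split as even as possible.
    for i in range(n_weak):
        counts[weak[i % len(weak)]] += 1
    for i in range(n_questions - n_weak):
        counts[rest[i % len(rest)]] += 1
    return counts
```

Allocating counts up front, rather than sampling each question independently, guarantees the 70/30 split on every run instead of only on average.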

Progress tracking

Every session writes to ~/.claude/quiz-progress.json: date, round number, score, per-topic breakdown, weak areas, strong areas. Over four rounds I could see exactly which topic I kept missing and which ones had actually stuck.
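A minimal sketch of the write step, assuming the file holds a JSON list of session records. The key names, the fraction-of-points representation for `per_topic`, and the weak/strong thresholds (below 0.6 and at least 0.8) are all assumptions for illustration, not the skill's actual schema.

```python
import json
from datetime import date
from pathlib import Path

PROGRESS_FILE = Path.home() / ".claude" / "quiz-progress.json"


def append_session(
    score: float,
    per_topic: dict[str, float],
    path: Path = PROGRESS_FILE,
    weak_below: float = 0.6,    # assumed threshold for a "weak" topic
    strong_from: float = 0.8,   # assumed threshold for a "strong" topic
) -> dict:
    """Append one session record to the progress log and return it.

    per_topic maps topic -> fraction of available points earned (0.0 to 1.0).
    The file is assumed to be a JSON list of records; it is created if missing.
    """
    sessions = json.loads(path.read_text()) if path.exists() else []
    record = {
        "date": date.today().isoformat(),
        "round": len(sessions) + 1,
        "score": score,
        "per_topic": per_topic,
        "weak_areas": sorted(t for t, s in per_topic.items() if s < weak_below),
        "strong_areas": sorted(t for t, s in per_topic.items() if s >= strong_from),
    }
    sessions.append(record)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(sessions, indent=2))
    return record
```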

The progress file is the whole point — it turns a snapshot into a trend, and the trend is where the learning signal actually lives. A one-shot quiz tells you what you don't know right now. A progress log tells you whether studying is working.
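Turning the log into a trend takes only a few lines. This sketch assumes each session record carries `round`, `per_topic` (topic to fraction of points earned), and `weak_areas` keys; that shape is my assumption about the file, and the function names are hypothetical. It answers the two questions from the four-round review: which topics keep getting flagged, and whether each topic is moving up or down.

```python
from collections import defaultdict


def topic_history(sessions: list[dict]) -> dict[str, list[float]]:
    """Collect each topic's per-round score, in round order."""
    history: dict[str, list[float]] = defaultdict(list)
    for s in sorted(sessions, key=lambda s: s["round"]):
        for topic, frac in s["per_topic"].items():
            history[topic].append(frac)
    return dict(history)


def trend(history: dict[str, list[float]]) -> dict[str, float]:
    """Change from the first to the latest round per topic (positive = improving)."""
    return {topic: scores[-1] - scores[0] for topic, scores in history.items()}


def persistent_gaps(sessions: list[dict]) -> list[tuple[str, int]]:
    """Topics ranked by how many rounds they were flagged weak, most first."""
    counts: dict[str, int] = defaultdict(int)
    for s in sessions:
        for topic in s["weak_areas"]:
            counts[topic] += 1
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
```

A topic that tops `persistent_gaps` while showing a flat `trend` is exactly the "studying isn't working" signal a one-shot quiz can't give you.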

Cross-referencing source of truth

The underlying policy had a reference doc, but the engine code evolved faster than the doc did. When the skill grades an answer, it cross-references both. If I dispute a grading decision, the rule is to re-check the engine code before overruling, because the engine is the source of truth.

This is the pattern that generalizes. If you're quizzing against a document that might be stale, name the authoritative source and wire the skill to check it.
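The precedence rule fits in two small functions. In this sketch, plain dictionaries mapping a question ID to an answer stand in for the reference doc and the engine code; in practice the model does the reading and comparing, and the names here are hypothetical. The point is the ordering: the code wins whenever it has an answer, a disagreement flags the doc as stale, and a disputed verdict is only overruled if the code backs the disputed answer.

```python
from typing import Optional


def _norm(text: str) -> str:
    """Loose comparison: ignore case and surrounding whitespace."""
    return text.strip().lower()


def expected_answer(
    qid: str, doc: dict[str, str], code: dict[str, str]
) -> tuple[Optional[str], str]:
    """Pick the answer to grade against. The engine code outranks the doc."""
    if qid in code:
        stale = qid in doc and _norm(doc[qid]) != _norm(code[qid])
        return code[qid], ("code (doc is stale)" if stale else "code")
    if qid in doc:
        return doc[qid], "doc"
    return None, "no source"


def resolve_dispute(qid: str, my_answer: str, verdict: str, code: dict[str, str]) -> str:
    """Re-check the engine code before overruling a disputed verdict."""
    if qid in code and _norm(code[qid]) == _norm(my_answer):
        return "correct"  # the code backs my answer, so the verdict is overruled
    return verdict        # otherwise the original grading stands
```

Recording which source an answer came from also gives you a running list of places where the doc has drifted from the code.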

Generalizing

The original version was domain-specific, but the pattern transfers to any body of knowledge you need to internalize: a codebase's architecture, a regulatory framework, a product spec, a language's idioms. The ingredients are:

- A reference document (source of truth)

- A topic taxonomy (so you can measure weakness per area)

- A progress file (so you can see the trend)

- A weak-mode that biases toward gaps

Four ingredients and a skill. Cheaper than flashcards, and the quiz adapts to what you still don't know.

Clean examples that do not involve confidential material:

- quizzing yourself on a private codebase's architecture

- learning a regulatory or compliance framework

- internalizing a product spec before a launch review